How to build and deliver an effective data strategy: part 2

In our previous blog we took a detailed look at the importance of building a data strategy, and started laying out the steps to build one that fosters a data-driven culture and business innovation. In part 2, we’re focusing on five key steps that will help you build an effective data strategy, from building a capability model and starting your journey on the maturity curve, to focusing on products and services, and the tools and technology that will pay dividends all along that journey.

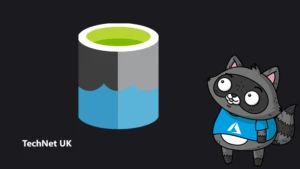

1. Building a capability organisation-wide and project-wide

Now that you have your key projects mapped to business outcomes, calibrated by impact and complexity, and have established your baseline, you can start building the capability to deliver them.

The first step is to look at all the capabilities you need, either holistically at an organisational level or at a project level. Start by mapping what you have.

Figure 1: Exercise 1, assessing current capability.

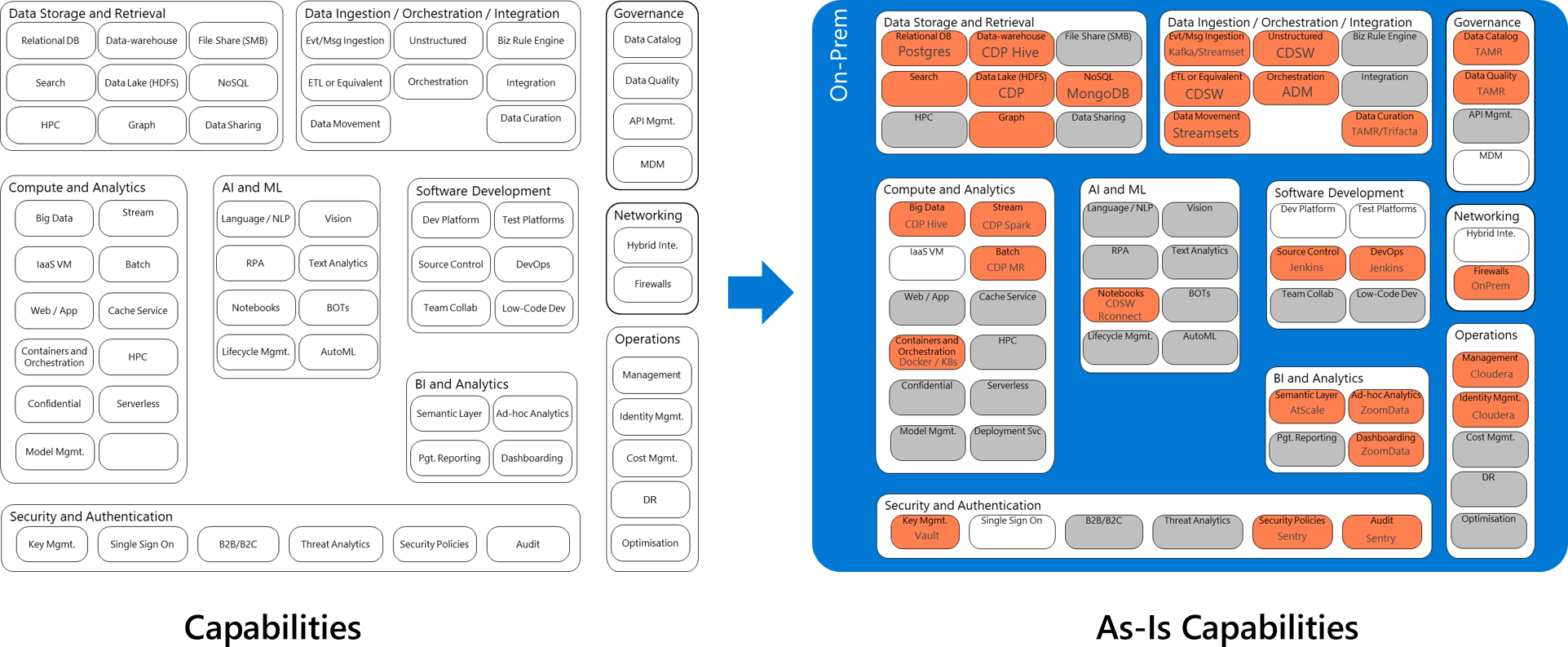

As a next step, look at Azure native services, and start mapping what you need to deliver success.

Figure 2: Exercise 2, mapping to cloud native capabilities.
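One lightweight way to run both exercises is to keep the two maps as plain data and compute the gap between them. The sketch below is illustrative only: the capability names and the `capability_gap` helper are hypothetical and are not taken from the figures.

```python
# Hypothetical capability names, for illustration only.
current = {"data ingestion", "reporting", "data warehousing"}
required = {"data ingestion", "reporting", "data governance", "machine learning"}


def capability_gap(current: set, required: set) -> dict:
    """Split capabilities into those in place, those missing, and those not tied to a need."""
    return {
        "in_place": sorted(required & current),
        "missing": sorted(required - current),
        "unmapped": sorted(current - required),  # owned, but not linked to a project
    }


gap = capability_gap(current, required)
print(gap)
```

The `missing` list is the natural starting point for mapping to cloud native services, while `unmapped` highlights capabilities you maintain that no current project depends on.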

2. Culture is a key part of a successful data strategy

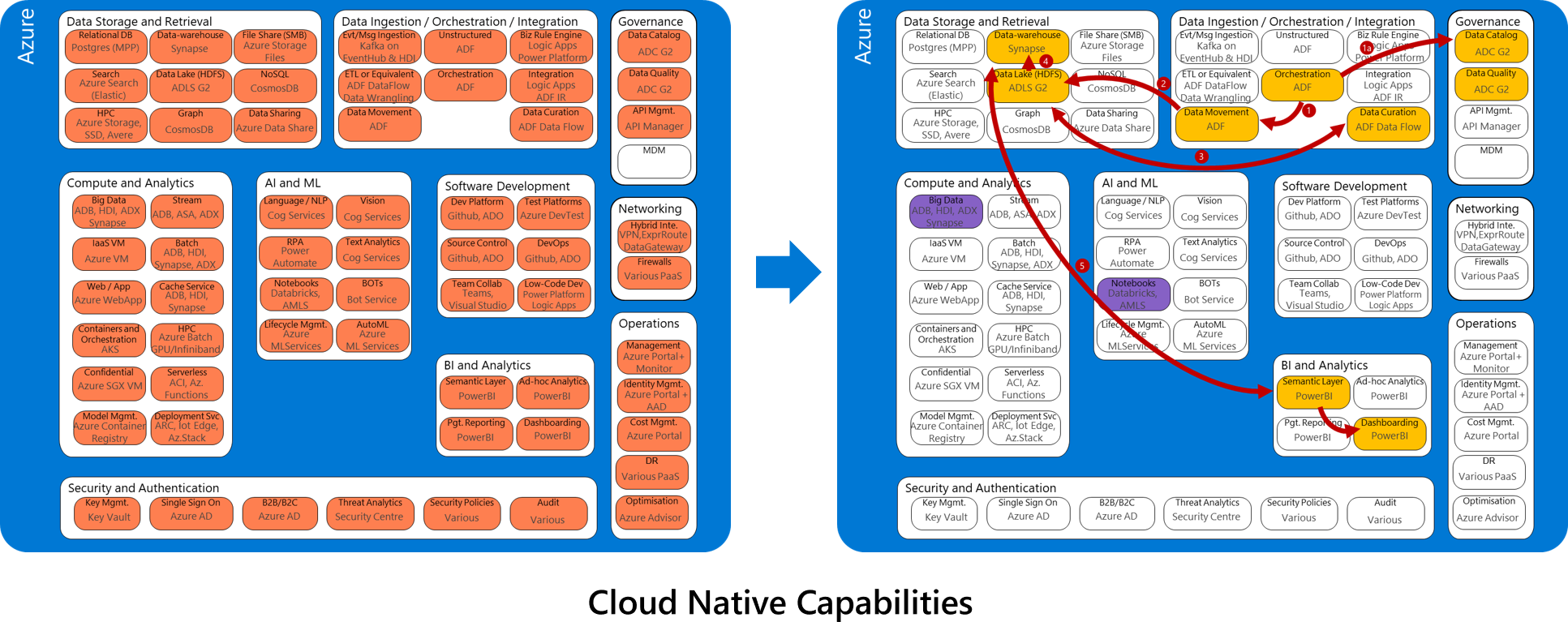

To build a successful data strategy, you need a data-driven culture: one that consistently fosters open, collaborative participation, so that the entire workforce can learn, communicate and improve the organisation’s business outcomes. It will also improve each employee’s own ability to generate impact or influence, backed by data. Where you start on the journey will depend on your organisation, your industry, and where you are on the maturity curve. Let’s look at what a maturity curve looks like:

Level 0

Data is not exploited programmatically and consistently. The data focus within the company is from an application development perspective. On this level, we commonly see ad-hoc analytics projects. Additionally, each application is highly specialised to its unique data and stakeholder needs. Each has a significant code base and engineering team, with many being engineered outside of IT as well. Finally, use case enablement and analytics are both very siloed.

Level 1

Here, we see teams being formed and strategy being created, but analytics is still departmentalised. At this level, organisations tend to be good at traditional data capture and analytics. They may also have a level of commitment to cloud-based approaches; for example, they may already be accessing data from the cloud.

Level 2

The innovation platform is almost ready, with workflows in place to deal with data quality, and the organisation is able to answer a few ‘why’ questions. At this level, organisations are actively looking for an end-to-end data strategy with centrally governed data lake stores that control data store sprawl and improve data discoverability. They are ready for smart and intelligent apps that bring compute to the centrally governed data lake(s), reducing the need for federated copies of key data, reducing GDPR and privacy risks, and reducing compute costs. They are also ready for multi-tenant, centrally hosted shared data services for common data computing tasks, and recognise the value of these in speeding up insights from data science driven Intelligence Services.

Level 3

Some of the characteristics of this level are a holistic approach to data and projects related to data being deeply integrated with business outcomes. We would also see predictions being done using analytics platforms. At this level, organisations are unlocking digital innovation from both a data estate and application development perspective. They have the foundational data services including data lake(s) and shared data services in place. Multiple teams across the company are successfully delivering on critical business workloads, key business use cases, and measurable outcomes. Telemetry is being utilised to identify new shared data services. IT is a trusted advisor to teams across the company to help improve critical business processes through the end-to-end trusted and connected data strategy.

Level 4

Here we see the entire company using a data-driven culture, frameworks and standards enterprise-wide. We also see automation, centres of excellence around analytics and/or automation, and data-driven feedback loops in action. One of the outcomes of a data-driven culture is the use of AI in a meaningful way, and here it is easy to define a maturity model such as the one shown below.

Figure 3: Maturity evolution of organization across reporting, deriving insights & decision support.

3. Focus on architecture

When considering any data product or service, it’s important to focus on the architectural principles. Think about whether you want to continue to manage and maintain your current services or products, or undertake new ones. The five architectural constructs are detailed in the Azure Well Architected Framework and summarised below.

1. Security

This is about the confidentiality and integrity of data, including privilege management, data privacy and establishing appropriate controls. For all data products and services, consider network isolation, end-to-end encryption, auditing and policies at platform level. For identity, consider single sign-on integration, conditional access backed by multi-factor authentication, and managed service identities. It is essential to focus on separation of concerns, such as control plane versus data plane, role-based access control (RBAC), and where possible, attribute-based access control (ABAC). Security and data management must be baked into the architectural process at all layers for every application and workload. In general, set up processes around regular or continuous vulnerability assessment, threat protection and compliance monitoring.
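To make the difference between RBAC and ABAC concrete, here is a minimal sketch in plain Python. It is not tied to any Azure API: the roles, the `User` and `Dataset` types, and the policy rule are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission map; real platforms define their own roles.
ROLE_PERMISSIONS = {
    "data_reader": {"read"},
    "data_engineer": {"read", "write"},
}


@dataclass(frozen=True)
class User:
    name: str
    role: str
    department: str


@dataclass(frozen=True)
class Dataset:
    name: str
    owner_department: str
    contains_personal_data: bool


def rbac_allows(user: User, action: str) -> bool:
    """RBAC: the decision depends only on the user's role."""
    return action in ROLE_PERMISSIONS.get(user.role, set())


def abac_allows(user: User, action: str, dataset: Dataset) -> bool:
    """ABAC: the role check is refined by attributes of the user and the data."""
    if not rbac_allows(user, action):
        return False
    # Hypothetical policy: personal data is visible only to the owning department.
    if dataset.contains_personal_data and user.department != dataset.owner_department:
        return False
    return True


analyst = User("asha", "data_reader", "marketing")
payroll = Dataset("payroll", "hr", contains_personal_data=True)

print(rbac_allows(analyst, "read"))           # the role alone permits reading
print(abac_allows(analyst, "read", payroll))  # attributes deny this dataset
```

The role answers "what kind of action may this person take", while the attribute check answers "on which data, and under what conditions", which is why ABAC is usually layered on top of RBAC rather than replacing it.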

2. Reliability

Everything has the potential to break, and data pipelines are no exception. Hence, great architectures are designed with availability and resiliency in mind. The key considerations are how quickly you can detect change, and how quickly you can resume operations. When building your data platform, consider resilient architectures, cross-region redundancy, service SLAs and critical support. Set up auditing, monitoring and alerting by using integrated monitoring and a notification framework.
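The two considerations above, detecting a failure quickly and resuming quickly, can be sketched for a single pipeline step as follows. This is a minimal illustration using only the Python standard library; `run_with_retry`, the `on_failure` callback and the `flaky_load` step are hypothetical names, and a real platform would normally rely on its orchestrator's retry and alerting features instead.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retry(
    step: Callable[[], T],
    on_failure: Callable[[int, Exception], None],
    max_attempts: int = 3,
    base_delay_s: float = 1.0,
) -> T:
    """Run a pipeline step, reporting each failure and retrying with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except Exception as exc:
            on_failure(attempt, exc)  # detect: surface the failure immediately
            if attempt == max_attempts:
                raise  # give up so the caller can escalate
            time.sleep(base_delay_s * 2 ** (attempt - 1))  # resume: back off, then retry


# Hypothetical step that fails twice with a transient error, then succeeds.
calls = {"n": 0}


def flaky_load() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("transient")
    return "loaded"


alerts = []
result = run_with_retry(
    flaky_load,
    on_failure=lambda attempt, exc: alerts.append((attempt, str(exc))),
    base_delay_s=0.01,
)
print(result, alerts)
```

Reporting every failed attempt, not only the final one, is a deliberate choice here: repeated transient failures are often the earliest sign that something upstream has changed.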

3. Performance efficiency

User delight comes from the architectural constructs of performance and scalability. Performance can vary based on external factors. It is key to continuously gather performance telemetry and react as quickly as possible, i.e. using the architectural constructs for management and monitoring. The key considerations here are storage and compute abstraction, dynamic scaling, partitioning, storage pruning, enhanced drivers, and multi-layer cache. Take advantage of hardware acceleration such as FPGA network where possible.
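Partitioning and storage pruning work together: if data is laid out by a key that queries filter on, the engine can skip whole partitions without reading them. The sketch below imitates that idea with an in-memory dictionary. The daily partition layout, the sample rows and the `scan` helper are assumptions made for illustration, not a description of how any particular service implements pruning.

```python
from datetime import date

# Hypothetical layout: one partition per day, keyed by the partition date.
partitions = {
    date(2024, 1, 1): [{"order_id": 1, "amount": 20.0}],
    date(2024, 1, 2): [{"order_id": 2, "amount": 35.5}, {"order_id": 3, "amount": 12.0}],
    date(2024, 1, 3): [{"order_id": 4, "amount": 50.0}],
}


def scan(partitions: dict, start: date, end: date):
    """Read only partitions whose key falls in [start, end]; the rest are never opened."""
    selected = [key for key in partitions if start <= key <= end]
    rows = [row for key in selected for row in partitions[key]]
    return rows, len(selected)


rows, partitions_read = scan(partitions, date(2024, 1, 2), date(2024, 1, 2))
total = sum(row["amount"] for row in rows)
print(partitions_read, total)
```

The benefit grows with data volume: a query for one day touches one partition regardless of how many days of history are stored.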

4. Cost optimisation

Every bit of your platform investment must yield value. It is critical to architect with the right tool for the right solution in mind. This will help you analyse spend over time and give you the ability to scale out or scale in when needed. For your data and analytics platform, consider reusability, on-demand scaling and reduced data duplication, and take advantage of the Azure Advisor service.

5. Operational excellence

This is about making the operational management of your data products and services as seamless as possible through automation and your ability to respond quickly to events. Focus on data ops through process automation, automated testing, and consistency. For AI, consider building in an MLOps framework as part of your normal release cycle.
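As one example of what automated testing can mean for data ops, the sketch below checks a batch of records before it is published. The `validate_batch` helper, the field names and the two rules (required fields present, no duplicate `id`) are hypothetical; in practice the rules would come from your own data contracts.

```python
def validate_batch(rows, required_fields):
    """Return a list of human-readable problems; an empty list means the batch passes."""
    problems = []
    seen_ids = set()
    for index, row in enumerate(rows):
        # Rule 1: every required field must be present and not None.
        for field in required_fields:
            if row.get(field) is None:
                problems.append(f"row {index}: missing {field}")
        # Rule 2: the id must be unique within the batch.
        row_id = row.get("id")
        if row_id is not None:
            if row_id in seen_ids:
                problems.append(f"row {index}: duplicate id {row_id}")
            seen_ids.add(row_id)
    return problems


batch = [
    {"id": 1, "amount": 10.0},
    {"id": 1, "amount": None},
    {"id": 2, "amount": 5.0},
]
problems = validate_batch(batch, ["id", "amount"])
print(problems)
```

A check like this can run as a gate in the release pipeline, so that a failing batch stops the deployment in the same way a failing unit test would.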

4. Tools and technology to power your data strategy

The right set of tools and technologies will be the backbone of your data products and services. Here is one of the key considerations to keep in mind.

Do not get stuck in a never-ending learning or design loop, otherwise known as analysis paralysis, or building PoC after PoC. Beyond a certain point, additional time spent in this cycle does not add equivalent value to your organisation’s business objectives.

5. Think big, start small, and act fast.

Even if you don’t have 100 percent of the features from the get-go, it is more important to get started on delivering business value iteratively. Leave the rest to product innovation from vendors and the capability you are going to build with each iteration. A growth mindset is cultivated best when we accomplish more with less. This balance is an art, and it fosters creativity and innovation.

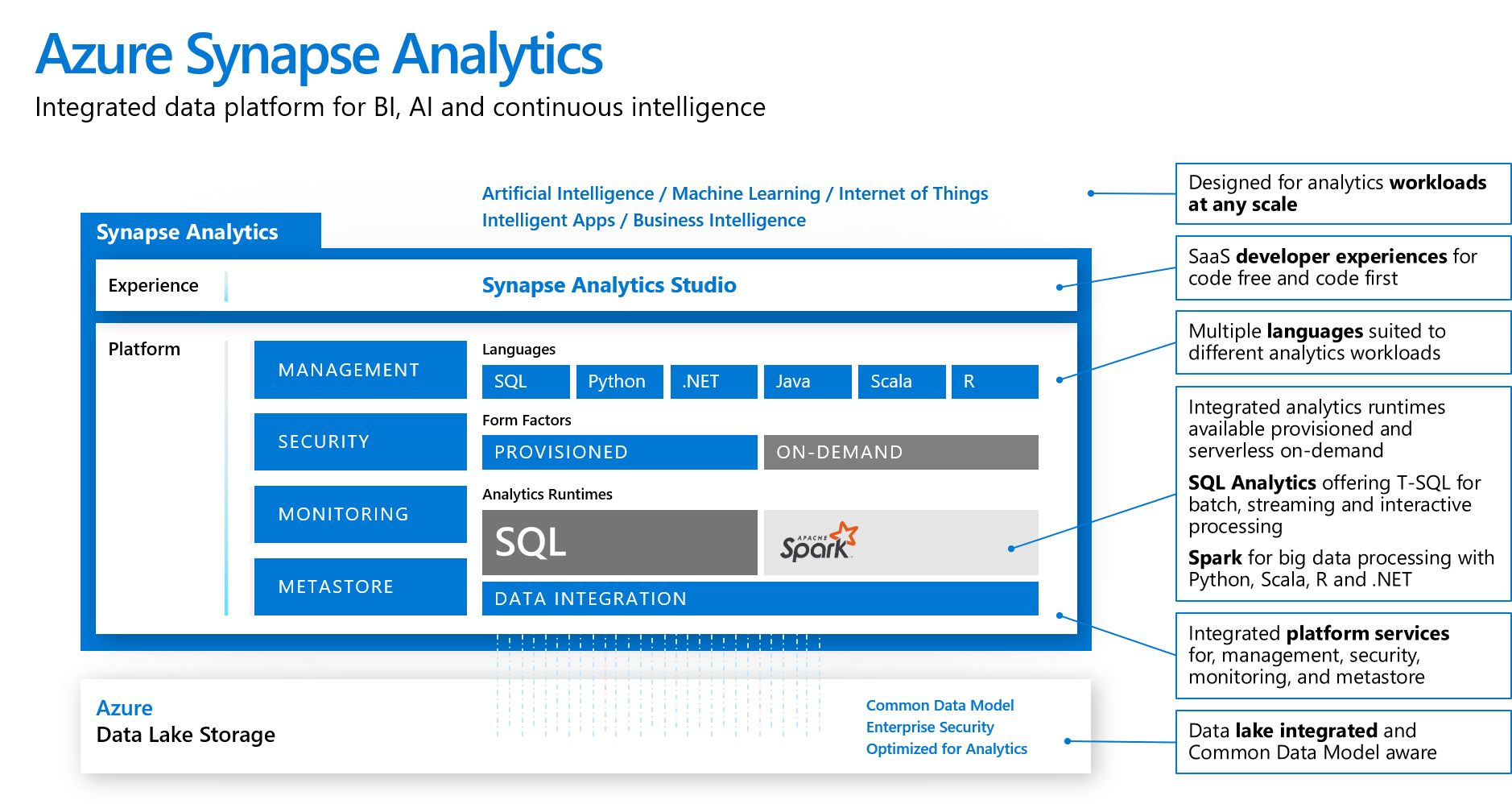

Simplification is key. At Microsoft, we have been innovating on behalf of our customers. We have many services for data procurement and many more for storage, depending on the volume, variety, velocity, and veracity of the data. Similarly, there is an array of services for analytics, visualisation, and data science. Despite the flexibility and options, we understand that simplicity is important. A holistic solution that you can get started with immediately makes it easier to see a return on investment sooner. For example, we see Azure Synapse Analytics as a category in its own right. It has ample integration options with your current estate, as well as with ISV solutions.

In a nutshell, what we have created is a single integrated platform for BI, AI and continuous intelligence. It is wrapped in four foundational capabilities: management, security, monitoring and a metastore. Underpinning these are a decoupled storage layer, a data integration layer and analytics runtimes, either on-demand (serverless) or provisioned. The runtimes provide choice, such as SQL with T-SQL for batch and interactive processing, or Spark for big data, with support for languages such as SQL, Python, .NET, Java and Scala. All of this is made available through a single interface called Synapse Analytics Studio.

Figure 4: Azure Synapse Analytics for integrated data platform experience for BI, AI and continuous intelligence.

The start of a successful journey to a modern data strategy

This principled approach will help you shift from an application-only approach to an application and data-led approach. It will help your organisation build an end-to-end data strategy that can ensure repeatability and scalability across current and future use cases that impact business outcomes. Our final blog in this three-part series takes a deep dive into how you can execute your data strategy successfully.

Read next: How to build and deliver an effective data strategy: part 3

Find out more

How to build and deliver an effective data strategy: part 1

Explore the Azure Well Architected Framework

Driving effective data governance for improved quality and analytics

Designing a modern data catalog at Microsoft to enable business insights

Download: A Guide to Data Governance

Resources for your development team

Encourage your developers to explore modern data warehouse analytics in Azure

About the author

Pratim Das is the Director of Data & AI Architecture and CDO Advisory at Microsoft UK. Pratim and his team’s mission is to work alongside their customers to deliver insights and, most importantly, value from data in pursuit of great business outcomes. Across retail, financial services, manufacturing, health care and the public sector, they bring industry knowledge and deep domain expertise to build a resilient data culture and customer capability. Pratim’s special interests are operational excellence for petabyte-scale analytics, and design patterns covering “good data architecture”, including governance, catalogue, privacy and data democratisation in a secure and compliant manner. Pratim brings over 20 years of experience, both as a customer and as a technology vendor building Data & AI services, with a key focus on building capability, products and solutions that foster a data-driven culture.
