Reduce cloud costs by adopting a “Pay as You Go” mindset – Part 1

One of the great benefits of adopting cloud is often quoted as “scalability”. People tend to automatically think in terms of having the ability to grow, but it also enables you to shrink.

The cloud offers many “Pay as You Go” (PAYG) offerings – for example for Azure Virtual Machines we charge by the minute. This is great for bursts, but let’s look at it another way – for every minute that you are running a Virtual Machine with excess capacity, you’re spending more than you need. For services such as Azure Container Instances, Functions and Spring Cloud, we charge by the second.

Customers should adopt a pure “Pay as You Go” mindset, as this removes the worry of being locked into expensive mistakes and frees you to experiment, ultimately driving a cost-aware culture and bringing flexibility into your deployments.

Azure Advisor provides recommendations to help you reduce costs, among other areas, and these roll up into your Advisor Score. By building the right governance and reporting models, you can use this score as one of the data points to gamify and demonstrate how efficiently different teams are running. Azure Cost Management enables you to set up budgets and should form another part of your toolkit. You should also leverage Azure Policy to apply controls, such as limiting very expensive SKUs so that they can only be deployed where needed.
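As a rough illustration of the SKU control mentioned above, the sketch below builds a policy rule in Python and prints it as JSON. It is modelled on the general shape of an "allowed VM sizes" style rule; the function name and the parameter name `listOfAllowedSKUs` are assumptions made for this example, so check the field alias and structure against the current Azure Policy documentation before using anything like it.

```python
import json


def allowed_vm_sku_rule(parameter_name: str = "listOfAllowedSKUs") -> dict:
    """Build a rule that denies any VM whose size is not in an allow-list.

    The parameter name is an assumption for this sketch; the allow-list
    itself would be supplied when the policy is assigned.
    """
    return {
        "if": {
            "allOf": [
                # Only evaluate virtual machines.
                {"field": "type", "equals": "Microsoft.Compute/virtualMachines"},
                # Match when the requested size is NOT in the allowed list.
                {
                    "not": {
                        "field": "Microsoft.Compute/virtualMachines/sku.name",
                        "in": f"[parameters('{parameter_name}')]",
                    }
                },
            ]
        },
        "then": {"effect": "deny"},
    }


if __name__ == "__main__":
    print(json.dumps(allowed_vm_sku_rule(), indent=2))
```

Assigning a rule like this at a broad scope, with a more generous allow-list only on the subscriptions or resource groups that genuinely need large machines, is one way to express "only where needed".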

Beyond our tooling, Microsoft also has the Well-Architected Framework, which includes Well-Architected Reviews: a simple series of questions to support you in improving your capabilities across the five pillars of cost optimization, operational excellence, performance efficiency, reliability, and security.

Whilst it is generally true that we should also look towards Platform-as-a-Service (also a great fit for a PAYG mindset!) and Software-as-a-Service offerings to enjoy even greater benefits, there are still, and will be for a while, many Virtual Machines that we need to run efficiently. The goal of this two-part series is to show how a Pay as You Go mindset can help you save money every minute, and contribute to a cultural shift towards automation and cost awareness.

Capacity – sizing

Sizing components is traditionally a difficult balancing act: judging anticipated growth and performance requirements over a number of years against spending too much on expensive hardware that may go under-utilised. In reality, one of two things will normally occur:

- Either the hardware is under-specified and has to be upgraded or replaced within its write-down period, or, as is most often the case,

- It is massively over-specified and largely idle.

There is an opportunity cost to the business here where capital (or OPEX in cloud), which could have been deployed elsewhere, is instead being needlessly deployed to provide insurance to the project and operations teams.

In the cloud, your planning horizon should be much shorter (days or hours) and it isn’t a one-off exercise. With a PAYG mindset, it is a constant evaluation of ensuring you are getting the maximum value for your infrastructure costs.

Good – Right-Sizing & Tight-Sizing

Right-sizing is typically thought of as a one-off exercise done as part of a migration to the Cloud. In reality, it should be a continuous and ongoing process. Azure Advisor makes recommendations on identifying under-utilised and idle resources. For more traditional workloads which don’t easily consume more/less resources, regular sizing reviews can ensure you continue to have the best fit for your workload.

Where right-sizing is typically applied to machines which are already running, tight-sizing brings the same concepts to the original deployment phase. When you first deploy your workload, size it for the workload expected in the near term; don’t size it for next year’s growth. If you are deploying a very large workload, you are able to rapidly scale the machine up or down as required while you do your acceptance and go-live testing. Don’t go big to be safe and then never review it.
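The tight-sizing idea can be sketched as "pick the smallest size that covers the near-term peak plus a little headroom". The example below is a minimal illustration only: the size names, the catalogue, and the 20% headroom are all hypothetical and are not real Azure SKUs or recommendations.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class VmSize:
    name: str        # hypothetical label, not a real Azure SKU
    vcpus: int
    memory_gib: int


# Hypothetical catalogue used only for this sketch.
CATALOGUE = (
    VmSize("small", 2, 8),
    VmSize("medium", 4, 16),
    VmSize("large", 8, 32),
)


def tight_size(
    peak_vcpus: float,
    peak_memory_gib: float,
    headroom: float = 0.2,
    catalogue: Sequence[VmSize] = CATALOGUE,
) -> Optional[VmSize]:
    """Return the smallest size covering the near-term peak plus headroom.

    Returns None when nothing in the catalogue is large enough.
    """
    need_cpu = peak_vcpus * (1 + headroom)
    need_mem = peak_memory_gib * (1 + headroom)
    # Walk the catalogue from smallest to largest and stop at the first fit.
    for size in sorted(catalogue, key=lambda s: (s.vcpus, s.memory_gib)):
        if size.vcpus >= need_cpu and size.memory_gib >= need_mem:
            return size
    return None
```

Feeding this with the peak expected over the coming weeks, rather than next year's forecast, and re-running it at each sizing review is what keeps the exercise continuous.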

When sizing your instances do not lose sight of the importance of selecting the correct disk type. We have a range of disks from traditional standard Hard Disks, up through standard and premium solid state disks, as well as our Ultra disk. In selecting the best disk type for your workload don’t focus on cost or performance alone – think about the impact on any Service Level Agreement from Microsoft too. For example, our SLA on single instance Virtual Machines varies by disk type. Across dev and non-production, do you need anything more performant or with a higher SLA than standard hard disks?

We have seen customers with potential savings of over $1m per annum, just from right-sizing their estate as part of migrations.

Better – Smart Capacity

Many traditional workloads will not benefit from adding extra servers to handle load, or perhaps there are licensing conditions which make this an unattractive option. Leveraging the automation capabilities of Azure, you can potentially realise savings by resizing your infrastructure to meet known usage patterns during the month. The simplest example of this is, of course, reporting servers. Generally they tick along for the bulk of the month, but at month end they are hugely loaded. As part of your right-sizing effort you may have sized the box to the best value option to handle this peak (as you should), but what if you could have two sizing profiles: one for normal usage and another for month-end? Rather than jump straight to fully automated re-sizing, you could start by manually re-sizing the server, perhaps under a standard change, and as you grow in confidence you could move to a fully automated process.
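The "two sizing profiles" idea can be expressed as a small date rule. The sketch below assumes the peak falls on the last seven days of each calendar month and borrows the 2 core / 8 GB and 4 core / 16 GB figures from the reporting server example in this post; the function and profile names are hypothetical, and the actual resize call is deliberately left out.

```python
import calendar
from datetime import date

# Profile values taken from the reporting server example in this post.
NORMAL = {"cores": 2, "ram_gb": 8}
MONTH_END = {"cores": 4, "ram_gb": 16}


def profile_for(day: date, peak_days: int = 7) -> dict:
    """Return the sizing profile that should be active on a given day.

    Assumes the peak window is the last `peak_days` days of the month.
    """
    days_in_month = calendar.monthrange(day.year, day.month)[1]
    first_peak_day = days_in_month - peak_days + 1
    return MONTH_END if day.day >= first_peak_day else NORMAL
```

A manual process could simply run this each morning to tell the operator which profile is due; a later, automated version would feed the result into whatever tooling performs the resize.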

In terms of pricing for these dual profiles, you could leverage instance size flexibility for Virtual Machine reservations – the illustration below explains this in more detail.

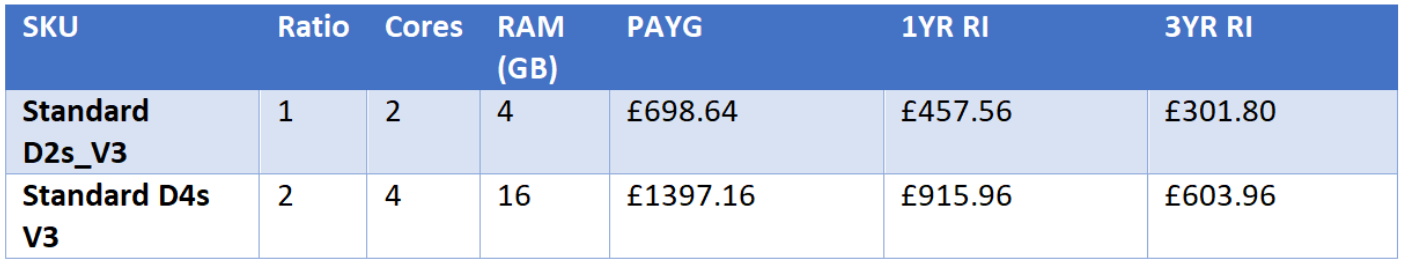

Let us assume we have a reporting server that requires 4 cores and 16 GB of RAM for 7 days a month, whilst 2 cores and 8 GB of RAM is adequate for the remaining days of the month. Using the Azure Pricing Calculator, we can compare the run costs of these two instance options.

If we take a 3-year reserved instance sized to cover the peak load we see at the end of each month, it will cost us £603.96 a year. If we instead take a 3-year reservation sized for the D2S and rely on instance size flexibility, this will cost us £301.80 a year for the base compute level. The Pay as You Go cost on top would be £160.77, giving a total cost of £462.57 for the year, a saving of £141.39 per year. This is a simple example, but if you are working with huge spiky workloads, the pool of available savings across your organisation can be substantial.
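The arithmetic behind that comparison is easy to reproduce, and doing so makes it simple to re-run with your own figures. The sketch below uses only the three annual costs quoted above; the variable names are illustrative, and `Decimal` is used so that the pence add up exactly.

```python
from decimal import Decimal

# Annual costs quoted in the example above (GBP).
reserved_peak = Decimal("603.96")   # reservation sized for the month-end peak
reserved_base = Decimal("301.80")   # reservation sized for the base level
payg_top_up = Decimal("160.77")     # Pay as You Go cost on top of the base

dual_profile_total = reserved_base + payg_top_up
saving = reserved_peak - dual_profile_total
saving_pct = (saving / reserved_peak * 100).quantize(Decimal("0.1"))

print(f"Dual-profile total: £{dual_profile_total}")   # £462.57
print(f"Annual saving:      £{saving}")               # £141.39
print(f"Saving vs peak RI:  {saving_pct}%")
```

Seen as a proportion, the dual-profile approach trims a little under a quarter off the cost of reserving for the peak all year round.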

Initially this may seem like science fiction, but as you build greater capabilities and confidence in your automation it will become second nature. We have seen customers who have taken this approach and are re-sizing on a nightly basis based on the forecast for that day. They also look at upcoming changes such as software upgrades, boosting power to reduce the time needed to complete such operations. Moving away from static sizing reduced the cost of running a large workload from $5.5M a year to $3.1M a year, a saving of roughly $2.4M a year.

Best – Auto-scale

Auto-scaling tends to be seen as the preserve of more modern architectures. If, however, your workload will happily run with instances turned off and use them if they are there (e.g., some form of load balancing), or you can script adding/removing instances, you too can benefit from scaling!

The key thing to bear in mind with scaling out (adding more workers, rather than making the worker bigger) is how long it takes from the virtual button being pressed until the instances can serve traffic. If your scaling process takes 30 minutes from trigger to serving load, it probably lends itself to a schedule-based auto-scaler that adds and removes instances at set times. Should your scaling operation take only a few minutes, metrics such as user count or CPU utilisation could be appropriate triggers.
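To make that decision concrete, here is a minimal sketch of the two approaches. Every threshold in it (the five-minute cut-off, the business hours, the 70% and 30% CPU limits, the instance counts) is an assumption chosen for illustration, and the functions only calculate a target instance count; they do not call any Azure API.

```python
def choose_trigger(minutes_to_serve: float, fast_threshold: float = 5.0) -> str:
    """Suggest a trigger type based on how long new instances take to serve."""
    return "metric" if minutes_to_serve <= fast_threshold else "schedule"


def scheduled_instances(
    hour: int, busy_hours: range = range(8, 18), peak: int = 4, off_peak: int = 1
) -> int:
    """Schedule-based scaling: a fixed count for busy hours, another otherwise."""
    return peak if hour in busy_hours else off_peak


def metric_instances(
    current: int,
    cpu_percent: float,
    scale_out_at: float = 70.0,
    scale_in_at: float = 30.0,
    minimum: int = 1,
    maximum: int = 10,
) -> int:
    """Metric-based scaling: step one instance out or in, within fixed bounds."""
    if cpu_percent >= scale_out_at:
        return min(current + 1, maximum)
    if cpu_percent <= scale_in_at:
        return max(current - 1, minimum)
    return current
```

With these assumed thresholds, a workload that needs 30 minutes to warm up would be steered to the schedule, where instances can be added ahead of the busy period, while one that is ready in three minutes can safely react to CPU load.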

If you are able to scale out through auto-scaling (provisioning and deprovisioning compute), you also save on storage costs, because where VMs are simply stopped you are still paying for their storage.

-=-

In this post we have described how adopting a Pay as You Go mindset can help organisations reduce costs. For example, when sizing a Virtual Machine to support a workload, the question changes from “How big a Virtual Machine do I need to order to support 3 years’ growth?” to “How big a Virtual Machine do I need to support this sales cycle?” or, even better, “How big a Virtual Machine do I need to support the demand today?”. In Part 2, we explore how a Pay as You Go mindset can be applied to your run-time and Disaster Recovery (DR) processes.