Updated: Analysis Services – Roadmap for SQL Server Code Name “Denali” and Beyond

At the TechEd North America 2011 conference in Atlanta this week, the SQL Server team is providing details on the SQL Server “Denali” release, including the upcoming public availability of a Community Technology Preview (CTP) this summer. As part of these announcements, we are providing an update on the SQL Server Analysis Services roadmap that was disclosed during the PASS 2010 conference last November (detailed here). In the past six months, we have been talking to many people in the Microsoft BI community – customers, partners, developers, and MVPs – to get feedback on the roadmap. We are seeing lots of excitement about the breakthrough innovations coming in SQL Server “Denali” and the new class of BI applications that it will enable. At the same time, we have learned that we need to be clearer about how customers can carry forward their existing BI applications and benefit from these innovations. Based on this feedback from the community, we’ve made a couple of adjustments and clarifications to the roadmap and vision, outlined below.

Analysis Services is an industry leading BI platform and the most popular OLAP engine in the market (see Gartner Magic Quadrant for BI Platforms). It has a large ecosystem of developers, partners, BI tools, and solutions, and it has broad adoption from small businesses to large enterprises. It is the foundation of the Microsoft BI stack, serving as the semantic model for Microsoft BI tools including Excel, SharePoint Insights and Reporting Services. Our vision is to build on the strengths and success of Analysis Services, expand its reach to a much broader community, and enable the next generation of BI applications. We will do this by embracing the relational data model and bringing it together with the multidimensional model under a single unified BI platform – providing the best of both worlds. Analysis Services, by virtue of its OLAP roots, has tremendous strengths in multidimensional modeling which is capable of tackling the most advanced of BI applications. On the other hand, the relational data model is widely understood and accepted by developers and IT professionals around the world. By bringing the two data models together, we will provide a powerful yet flexible platform that can tackle the diverse needs of BI applications – needs such as advanced analytics, sophisticated business logic, professional developer tools, choice of end user tools, performance, scalability, ease of use, and time to solution. The Business Intelligence Semantic Model is being introduced in Analysis Services for SQL Server “Denali” with the goal to fulfill this vision.

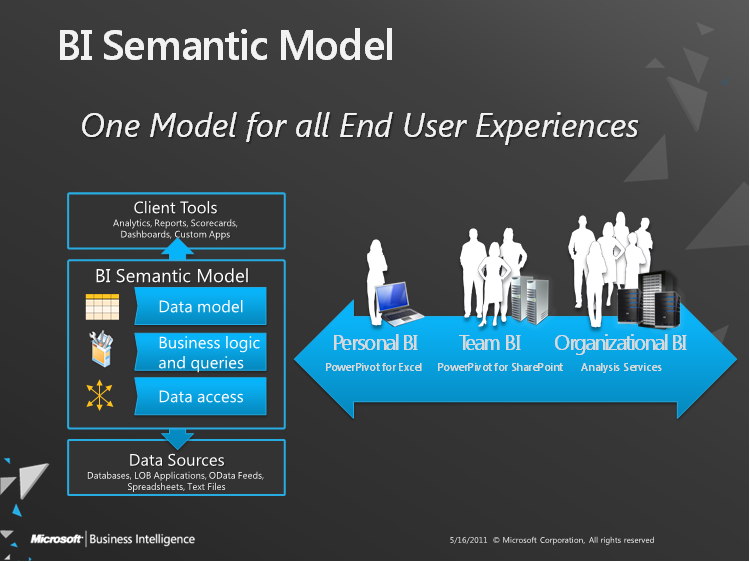

The BI Semantic Model is one model for all end user experiences – reporting, analytics, scorecards, dashboards, and custom applications. All client tools in the Microsoft BI stack – Excel, PowerPivot, SharePoint Insights and Reporting Services (including Crescent) – operate on this model. BI professionals can create the model in Visual Studio and deploy it to an Analysis Services server. Interestingly, the model can also be created by business users with PowerPivot for Excel and shared via PowerPivot for SharePoint. PowerPivot and Analysis Services are two different products; one targets business users and the other targets BI professionals and IT. However both use the BI Semantic Model under the covers. In the case of PowerPivot, the model is embedded inside an Excel workbook. In the case of Analysis Services, the model is stored and managed on the server. Having the same model behind these experiences enables seamless transition of BI applications from Personal BI to Team BI to Organizational/Professional BI. For example, a PowerPivot workbook built by a business user can be used as a starting point by a BI professional building an Analysis Services application. The SQL Server professional tools (Management Studio and BI Development Studio) make it very simple to import a PowerPivot workbook into Analysis Services.

Before we get into the details of the BI Semantic Model, let me first address an important question: What about existing Analysis Services cubes (also known as Unified Dimensional Model or UDM)? The answer is very simple: when you upgrade your Analysis Services project or server to SQL Server “Denali”, every cube automatically becomes a BI Semantic Model. The BI Semantic Model is an evolution of the UDM from a pure multidimensional model into a hybrid model that offers all of the capabilities of the UDM and more. As such, the term Unified Dimensional Model is no longer used with SQL Server “Denali” and is subsumed by the BI Semantic Model. This is a key difference in the roadmap from what was disclosed last November at PASS 2010.

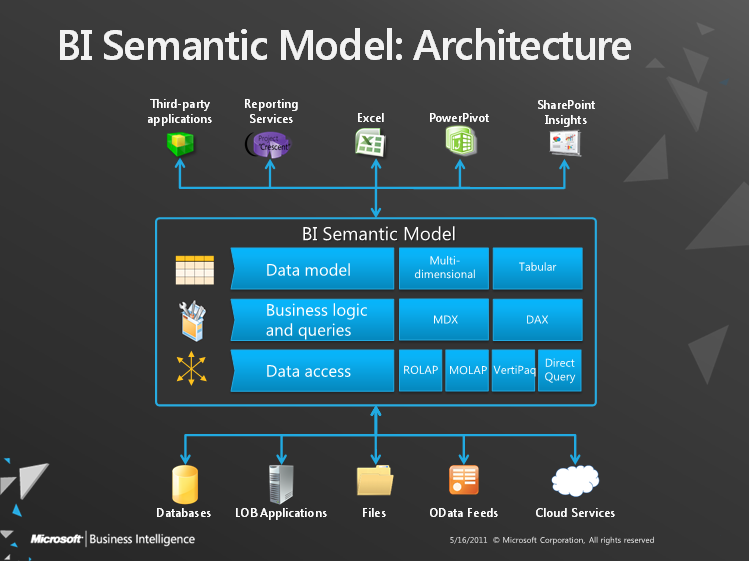

Now let’s get into the details of the BI Semantic Model – how to build a model and the technology that powers the model. The BI Semantic Model can be viewed conceptually as a three-layer model:

- Data Model: This is the conceptual data model that the model developer and the model consumer work with. The BI Semantic Model is a hybrid model supporting both multidimensional and tabular data modeling. The model developer can use BI Development Studio to create a BI Semantic Model using a multidimensional project or a tabular project. And the client tool can consume the model using a multidimensional or a tabular interface. Model developers will typically choose between multidimensional and tabular projects based on the needs of their application and their skill set. On the other hand, client tools will typically use either the multidimensional or tabular interface depending on the nature of the intended user experience. Rich analytical tools such as Excel will typically use the multidimensional interface while ad hoc reporting tools such as Crescent will use the tabular interface.

- Business Logic & Queries: This layer represents the intelligence or semantics in the model. Model developers can embed business logic in the model using MDX (Multidimensional Expressions) or DAX (Data Analysis Expressions) calculation languages. And client tools consuming the model can express additional business logic using MDX or DAX queries. MDX was pioneered by the Analysis Services team over the last 12 years and has become the BI industry standard for multidimensional calculations and queries. DAX is an expression language based on Excel formulas that was introduced in PowerPivot and built on tabular concepts. Again, model developers and client tools will choose between MDX and DAX based on application needs, skill set, user experience, etc.

- Data Access: This layer integrates data from multiple sources – relational databases, business applications, flat files, OData feeds, etc. There are two modes for retrieving and managing this data – cached and passthrough. The cached mode pulls in data from all the sources and stores it in a compressed data structure that is optimized for high speed data access. The passthrough mode pushes query processing and business logic down to the data source, thereby exploiting the capabilities of the source system and avoiding the need to copy the data. Obviously there is a tradeoff between the two modes and the model developer needs to make the choice based on application needs.

- There are two storage engines that can be used for caching the data – MOLAP and VertiPaq™. MOLAP is the storage engine that has been used in Analysis Services for many releases. It is optimized for OLAP and uses techniques such as pre-built aggregates, bitmap indexes, and compression to deliver great performance and scale. In SQL Server “Denali”, we are introducing the VertiPaq storage engine as an alternative to MOLAP. VertiPaq is an in-memory column store engine that combines state-of-art data compression and scanning algorithms to deliver blazing fast performance with no need for indexes, aggregates or tuning.

- There are two options for passthrough – ROLAP and DirectQuery. ROLAP is complementary to MOLAP while DirectQuery is complementary to VertiPaq. Both attempt to push query evaluation, as much as possible, down into the data source. ROLAP is optimized for large fact tables and relatively small dimension tables, while DirectQuery is mostly neutral towards the backend database structure (more on this in a later blog post).

As you can see, the BI Semantic Model is a rich, scalable, and flexible model. The richness lies in the sophisticated data modeling capabilities and complex business logic that can be expressed using MDX and DAX. The performance and scalability comes from the MOLAP and VertiPaq storage engines that enable data volumes all the way from a few megabytes up to multiple terabytes. Flexibility is probably the most interesting aspect of the model. Model developers can choose between the multidimensional and tabular data modeling experiences, between MDX and DAX for business logic, and between cached and passthrough modes for data access and storage. Regardless of the model developer’s choices, client tools see a single model and can consume it using the multidimensional or tabular interfaces and send MDX or DAX queries.

SQL Server “Denali” is the first release on the Analysis Services roadmap towards realizing the BI Semantic Model vision stated above. As such, there will be some limitations in this release in the flexibility we offer to model developers. BI Development Studio comes with two projects for building a BI Semantic Model – a multidimensional project and tabular project. The multidimensional project lets model developers use the multidimensional modeling experience along with MDX and MOLAP/ROLAP (this is what existing UDM projects get upgraded to). The tabular project lets model developers use the tabular modeling experience along with DAX and VertiPaq/DirectQuery. It is important to note that these restrictions in the two projects are not rigid or permanent; they could very well change in future releases based on customer requirements. For example, we could offer model developers VertiPaq as a storage option for multidimensional projects or MDX scripts for tabular projects. Another limitation in the upcoming CTP release is that models built using the multidimensional project will not support DAX queries (and thereby Crescent, which uses DAX to retrieve data from the model). We recognize that removing this restriction is very important for customers with existing Analysis Services solutions to be able to upgrade to SQL Server “Denali” and leverage Crescent. The product team is actively working on it and is committed to making this functionality available to customers.

Finally, I’d like to invite you to try out the upcoming Community Technology Preview (CTP) of SQL Server “Denali” and send us your feedback. The product team has been working very hard on it over the past year and we are excited to share it with you. You can check for the availability of the CTP by visiting http://www.sqlserverlaunch.com. In the upcoming months, we will be putting out blog posts and whitepapers that dive into the details of the BI Semantic Model and provide best practices and guidance on building and deploying models. The Microsoft BI community has been instrumental in helping us shape our product vision and strategy and we look forward to your close engagement and partnership as we look to redefine the BI landscape with these new innovations in SQL Server “Denali”.

T.K. Anand

Principal Group Program Manager

SQL Server Analysis Services