Breakthrough performance with in-memory technologies

In a blog post earlier this year on “The coming database in-memory tipping point”, I mentioned that Microsoft was working on several in-memory database technologies. At the SQL PASS conference this week, Microsoft unveiled a new in-memory database capability, code-named “Hekaton”[1], which is slated to be released with the next major version of SQL Server. Hekaton dramatically improves the throughput and latency of SQL Server’s transaction processing (TP) capabilities. Hekaton is designed to meet the requirements of the most demanding TP applications, and we have worked closely with a number of companies to prove these gains. Hekaton’s technology adoption partners include financial services companies, online gaming companies, and other companies that have extremely demanding TP requirements. What is most impressive about Hekaton is that it achieves breakthrough improvement in TP capabilities without requiring a separate data management product or a new programming model. It’s still SQL Server!

As I mentioned in the “tipping point” post, much of the energy around in-memory data management systems thus far has been focused on columnar storage and analytical workloads. As that post mentions, Microsoft already ships this form of technology in our xVelocity analytics engine and xVelocity columnstore index. The xVelocity columnstore index will be updated in SQL Server 2012 Parallel Data Warehouse (PDW v2) to support updatable clustered columnar indexes. Hekaton, in contrast, is a row-based technology squarely focused on transaction processing (TP) workloads. Note that these two approaches are not mutually exclusive. Hekaton complements SQL Server’s existing xVelocity columnstore index and xVelocity analytics engine, so a single product can memory-optimize both transactional and analytical workloads.

The fact that Hekaton and the xVelocity columnstore index are built into SQL Server, rather than shipped as a separate data engine, is a conscious design choice. Other vendors are either introducing separate in-memory optimized caches or building a unification layer over a set of technologies and introducing it as a completely new product. This adds complexity, forcing customers to deploy and manage a completely new product or, worse yet, manage both a “memory-optimized” product for the hot data and a “storage-optimized” product for the application data that is not cost-effective to keep primarily in memory.

Hekaton is designed around four architectural principles:

1) Optimize for main memory data access: Storage-optimized engines (such as the current OLTP engine in SQL Server) retain hot data in a main memory buffer pool based upon access frequency. The data access and modification capabilities, however, are built around the assumption that data may be paged in from or paged out to disk at any point. This assumption necessitates layers of indirection in buffer pools, extra code for sophisticated storage allocation and defragmentation, and logging of every minute operation that could affect storage. With Hekaton you place tables used in the extreme TP portion of an application in memory-optimized main memory structures. The remaining application tables, such as reference data details or historical data, are left in traditional storage-optimized structures. This approach lets you memory-optimize hotspots without having to manage multiple data engines.

Hekaton’s main memory structures do away with the overhead and indirection of the storage-optimized view while still providing the full ACID properties expected of a database system. For example, durability in Hekaton is achieved by streamlined logging and checkpointing that uses only efficient sequential IO.

2) Accelerate business logic processing: Given that the free ride on CPU clock rate is over, Hekaton must be more efficient in how it utilizes each core. Today SQL Server’s query processor compiles queries and stored procedures into a set of data structures which are evaluated by an interpreter in SQL Server’s query processor. With Hekaton, queries and procedural logic in T-SQL stored procedures are compiled directly into machine code with aggressive optimizations applied at compilation time. This allows the stored procedure to be executed at the speed of native code.

3) Provide frictionless scale-up: It’s common to find 16 to 32 logical cores even on a 2-socket server nowadays. Storage-optimized engines rely on a variety of mechanisms such as locks and latches to provide concurrency control. These mechanisms often have significant contention issues when scaling up with more cores. Hekaton implements a highly scalable concurrency control mechanism and uses a series of lock-free data structures to eliminate traditional locks and latches while guaranteeing the correct transactional semantics that ensure data consistency.

4) Built into SQL Server: As I mentioned earlier, Hekaton is a new capability of SQL Server. This lays the foundation for a powerful customer scenario which has been proven out by our customer testing. Many existing TP systems have certain transactions or algorithms which benefit from Hekaton’s extreme TP capabilities, for example the matching algorithm in financial trading, resource assignment or scheduling in manufacturing, or matchmaking in gaming scenarios. Hekaton enables optimizing these aspects of a TP system for in-memory processing while the cooler data and processing continue to be handled by the rest of SQL Server.
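
Principle 3 above says that Hekaton replaces locks and latches with a scalable concurrency control mechanism, but this post does not describe that mechanism in detail. As an illustration of the general idea only, here is a minimal Python sketch of optimistic, version-based validation: transactions read and buffer writes without taking locks, and a conflict is detected at commit time. The names `VersionedStore`, `Transaction`, and `ConflictError` are hypothetical, the code is single-threaded, and it is not a description of Hekaton's actual implementation; a real engine would install versions with atomic hardware operations.

```python
class ConflictError(Exception):
    """Raised when a row read by a transaction changed before it committed."""


class VersionedStore:
    """Toy in-memory table: key -> (version number, value). Hypothetical."""

    def __init__(self):
        self.rows = {}

    def begin(self):
        return Transaction(self)


class Transaction:
    def __init__(self, store):
        self.store = store
        self.read_versions = {}  # key -> version observed at first read
        self.writes = {}         # key -> new value, buffered until commit

    def read(self, key):
        # Reads never block: they just remember which version they saw.
        if key in self.writes:
            return self.writes[key]
        version, value = self.store.rows.get(key, (0, None))
        self.read_versions.setdefault(key, version)
        return value

    def write(self, key, value):
        # Writes are buffered privately, so no lock is held while working.
        self.writes[key] = value

    def commit(self):
        # Validation: every row we read must still be at the version we saw.
        for key, seen in self.read_versions.items():
            current, _ = self.store.rows.get(key, (0, None))
            if current != seen:
                raise ConflictError(key)
        # Install the buffered writes, bumping each row's version.
        for key, value in self.writes.items():
            current, _ = self.store.rows.get(key, (0, None))
            self.store.rows[key] = (current + 1, value)


store = VersionedStore()
setup = store.begin()
setup.write("acct", 100)
setup.commit()

# Two transactions read the same row, then both try to update it.
t1, t2 = store.begin(), store.begin()
t1.write("acct", t1.read("acct") - 30)
t2.write("acct", t2.read("acct") - 50)

t1.commit()              # first committer wins
try:
    t2.commit()          # fails validation: "acct" changed underneath it
    t2_committed = True
except ConflictError:
    t2_committed = False  # the application would retry t2 here
```

The design trade-off in this style is that nobody waits on a lock, but a transaction that loses the race has to be retried.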

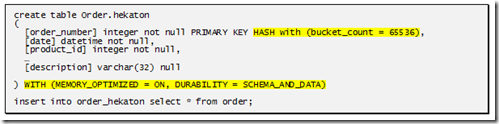

To make it easy to get started, we’ve built an analysis tool that you can run to identify the hot tables and stored procedures in an existing transactional database application. As a first step you can migrate hot tables to Hekaton as in-memory tables. Doing this simply requires the following T-SQL statements[2]:

While Hekaton’s memory-optimized tables must fully fit into main memory, the database as a whole need not. These in-memory tables can be used in queries just like any regular table, and even at this stage they already provide optimized, contention-free data operations.

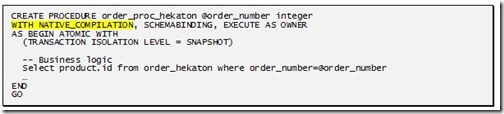

After migrating to optimized in-memory storage, stored procedures operating on these tables can be transformed into natively compiled stored procedures, dramatically increasing the processing speed of in-database logic. Recompiling these stored procedures is, again, done through T-SQL, as shown below:

What performance gain can you expect from Hekaton? Customer testing has demonstrated throughput gains of 5X to 50X on the same hardware, delivering extreme TP performance on mid-range servers. The actual speedup depends on multiple factors, such as how much data processing can be migrated into Hekaton and directly sped up, and how much cross-transaction contention is removed as a result of that speedup and of other Hekaton optimizations such as lock-free data structures.
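
To see why the fraction of work migrated matters so much, the first factor above can be approximated with Amdahl's law. The Python sketch below is only that approximation: the function name `overall_speedup` and the example fractions are illustrative assumptions, not measurements from customer testing, and the model ignores the second factor (reduced contention) entirely.

```python
def overall_speedup(migrated_fraction: float, local_speedup: float) -> float:
    """Amdahl's law: end-to-end speedup when only part of the work gets faster.

    migrated_fraction: share of total processing time moved to the fast path (0..1).
    local_speedup: how many times faster that share runs after migration.
    """
    if not 0.0 <= migrated_fraction <= 1.0:
        raise ValueError("migrated_fraction must be between 0 and 1")
    if local_speedup <= 0:
        raise ValueError("local_speedup must be positive")
    remaining = 1.0 - migrated_fraction          # work left on the old path
    accelerated = migrated_fraction / local_speedup
    return 1.0 / (remaining + accelerated)


# Hypothetical scenarios, each assuming the migrated part runs 100x faster.
for fraction in (0.50, 0.90, 0.99):
    print(f"{fraction:.0%} migrated -> {overall_speedup(fraction, 100.0):.1f}x overall")
```

Under these assumed numbers, migrating half of the processing yields roughly 2x overall, 90% yields roughly 9x, and 99% yields roughly 50x, which is why identifying the true hotspots first pays off.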

Hekaton is now in private technology preview with a small set of customers. Keep following our product blogs for updates and a future public technology preview.

Dave Campbell

Technical Fellow

Microsoft SQL Server

[1] Hekaton is from the Greek word ἑκατόν for “hundred”. Our design goal for the original Hekaton proof-of-concept prototype was to achieve a 100x speedup for certain TP operations.

[2] The syntax for these operations will likely change. The examples demonstrate how easy it will be to take advantage of Hekaton’s capabilities.