Merge Operation in Memory-Optimized Tables

In Storage Allocation and Management for Memory-Optimized Tables, we briefly touched upon the Merge operation. This blog describes the Merge operation in detail. We also recommend that you refer to implementing durability for memory-optimized tables for a good understanding of data/delta files and CFPs.

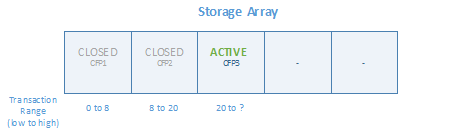

The metadata of all Checkpoint File Pairs (CFPs) that exist in storage is stored in an internal array structure referred to as the Storage Array. It is a finitely sized array of CFPs (4096 entries in CTP2 and 8192 entries in RTM) that supports a cumulative size of 256GB for durable memory-optimized tables in the database. The entries in the storage array are ordered by transaction range. The CFPs in the storage array (along with the tail of the log) represent all the on-disk state required to recover a database with memory-optimized tables.

Running an OLTP workload over time can cause the storage array to fill up, which leads to inefficiencies such as the following:

- Deleted rows – Deleted rows are not actually removed from the data file; instead, a reference to the deleted row is added to the corresponding delta file. This wastes storage, which in turn lengthens recovery time.

- Storage Array Manipulation Overhead – Internal processes search the storage array for operations such as finding the delta file to delete a row. The cost of these operations increases with the number of entries.

To alleviate these inefficiencies, the older closed CFPs are merged based on a merge policy (described below), so that the storage array is compacted to represent the same set of data with fewer CFPs and less storage.

Merge Operation

The Merge operation takes one or more closed CFPs, called MERGE SOURCE(s), based on an internally defined merge policy, described later, and produces one resultant CFP, called MERGE TARGET. The entries in each delta file of source CFP(s) are used to filter rows from the corresponding data file to remove the data rows that are not needed anymore. The remaining rows in the source CFPs are consolidated into one target CFP. After the merge is complete, the resultant CFP (i.e. the merge-target) replaces the source CFPs (i.e. the merge sources). The merge-source CFPs go through a transition phase before they are eventually removed from the storage.

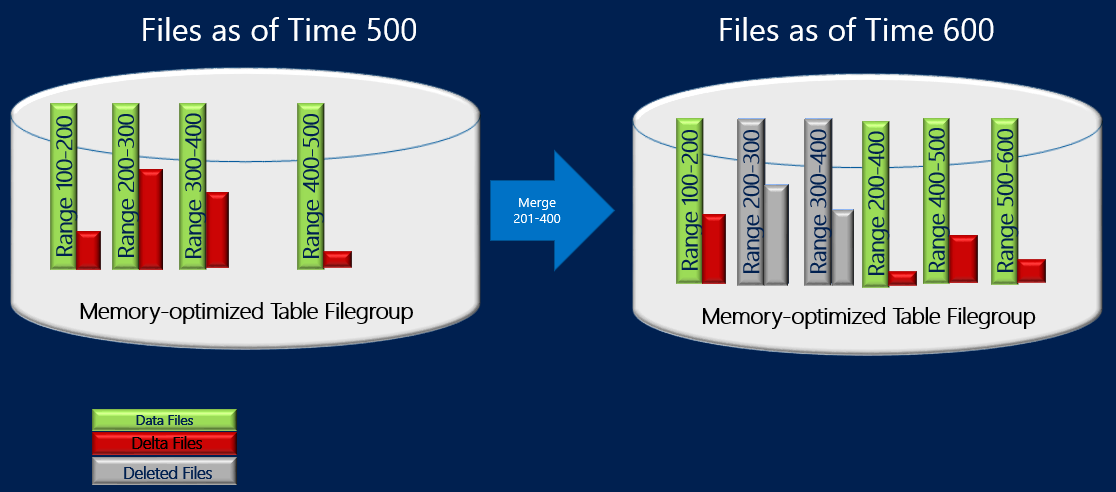

In this example, the memory-optimized table filegroup has four data and delta file pairs at timestamp 500 containing data from previous transactions. For example, the rows in the first data file correspond to transactions with timestamp > 100 and <= 200, alternatively represented as (100, 200]. The second and third data files are shown to be less than 50% full after accounting for the rows marked deleted. The merge operation combines these two CFPs and creates a new CFP containing transactions with timestamp > 200 and <= 400, which is the combined range of these two CFPs. You also see another CFP with range (500, 600] and a non-empty delta file for transaction range (200, 400]; this shows that the Merge operation can run concurrently with transactional activity, including the deletion of more rows from the source CFPs.
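To make the filtering step concrete, here is a minimal Python sketch of a merge. It is a toy model, not the engine's implementation: the `CFP` class, the `merge` function, the row ids, and the split of the (200, 400] range at timestamp 300 are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class CFP:
    """Toy checkpoint file pair: a data file plus its delta file (hypothetical model)."""
    low: int    # exclusive lower bound of the transaction range
    high: int   # inclusive upper bound of the transaction range
    data: dict = field(default_factory=dict)   # row id -> row payload
    delta: set = field(default_factory=set)    # row ids marked deleted


def merge(sources):
    """Consolidate adjacent closed CFPs into one merge target."""
    sources = sorted(sources, key=lambda c: c.low)
    # The sources must cover one contiguous transaction range.
    for left, right in zip(sources, sources[1:]):
        if left.high != right.low:
            raise ValueError("merge sources must be adjacent")
    target = CFP(low=sources[0].low, high=sources[-1].high)
    for cfp in sources:
        for row_id, row in cfp.data.items():
            if row_id not in cfp.delta:   # delta entries filter out deleted rows
                target.data[row_id] = row
    return target


# Assumed split of the example range: (200, 300] and (300, 400].
cfp2 = CFP(200, 300, {1: "a", 2: "b", 3: "c"}, {2, 3})
cfp3 = CFP(300, 400, {4: "d", 5: "e"}, {4})
target = merge([cfp2, cfp3])
print((target.low, target.high), target.data)
```

The target covers the combined range and starts with an empty delta file in this model; rows deleted after the merge would be recorded there.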

In the In-Memory OLTP engine, a background thread evaluates all closed CFPs using a merge policy and then initiates one or more merge requests for the qualifying CFPs. These merge requests are processed by the offline checkpoint thread. The merge policy is evaluated periodically and also when a checkpoint is closed.

Merge Policy

SQL Server 2014 implements the following merge policy:

- A merge is scheduled if 2 or more consecutive CFPs can be consolidated, after accounting for deleted rows, such that the resultant rows can fit into one data file of ideal size. The ideal size of the data file is determined as follows:

  - For machines with less than 16GB of memory, the data file is 16MB and the delta file is 1MB (not supported in CTP2)

  - For machines with more than 16GB of memory, the data file is 128MB and the delta file is 8MB

  A maximum of 10 consecutive CFPs can be part of one merge operation.

- A single CFP can be self-merged if the data file is larger than 256 MB and over half of the rows are marked deleted. A data file can grow larger than 128MB if, for example, a single transaction or multiple concurrent transactions insert or update a large amount of data. This causes the data file to grow beyond its ideal size because a transaction cannot span multiple CFPs. Note that self-merge is not supported in CTP2.
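The size thresholds above can be written down as two small checks. This is only a sketch in Python; the function names are hypothetical, and how a machine with exactly 16GB is treated is an assumption, since the policy text covers only the "< 16GB" and "> 16GB" cases.

```python
MB = 1024 * 1024
GB = 1024 * MB


def ideal_file_sizes(machine_memory_bytes):
    """Return (data_file_bytes, delta_file_bytes) for a machine's memory size."""
    # Assumption: exactly 16GB is treated like the larger configuration.
    if machine_memory_bytes < 16 * GB:
        return 16 * MB, 1 * MB
    return 128 * MB, 8 * MB


def qualifies_for_self_merge(data_file_bytes, total_rows, deleted_rows):
    """Self-merge rule: data file larger than 256 MB and over half the rows deleted."""
    return data_file_bytes > 256 * MB and deleted_rows * 2 > total_rows


print(ideal_file_sizes(8 * GB) == (16 * MB, 1 * MB))
print(qualifies_for_self_merge(300 * MB, total_rows=1000, deleted_rows=501))
```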

Here are some examples that show the CFPs that will be merged under the merge policy.

| Adjacent CFPs Source Files (% full) | Merge Selection |
| --- | --- |
| CFP0 (30%), CFP1 (50%), CFP2 (50%), CFP3 (90%) | (CFP0, CFP1). CFP2 is not chosen as it would make the resultant data file > 100% of the ideal size |
| CFP0 (30%), CFP1 (20%), CFP2 (50%), CFP3 (10%) | (CFP0, CFP1, CFP2). Files are chosen starting from the left. CFP3 is not combined as it would make the resultant data file > 100% of the ideal size |
| CFP0 (80%), CFP1 (30%), CFP2 (10%), CFP3 (40%) | (CFP1, CFP2, CFP3). Files are chosen starting from the left. CFP0 is skipped because, if combined with CFP1, the resultant data file would be > 100% of the ideal size |
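The selections in these examples are consistent with a simple left-to-right greedy scan. The Python sketch below is our reading of the examples, not the engine's actual policy evaluator; `select_merges` and its parameters are hypothetical names, and "% full" is taken to mean the fill level after accounting for deleted rows.

```python
MAX_SOURCES = 10   # at most 10 consecutive CFPs per merge


def select_merges(percent_full, ideal=100):
    """Return one tuple of CFP indexes per merge, scanning left to right."""
    merges = []
    i = 0
    while i < len(percent_full):
        total = 0
        j = i
        # Extend the run while the combined rows still fit in one ideal-size file.
        while (j < len(percent_full)
               and j - i < MAX_SOURCES
               and total + percent_full[j] <= ideal):
            total += percent_full[j]
            j += 1
        if j - i >= 2:          # a merge needs at least two consecutive CFPs
            merges.append(tuple(range(i, j)))
            i = j
        else:                   # this CFP cannot start a merge; skip it
            i += 1
    return merges


for example in ([30, 50, 50, 90], [30, 20, 50, 10], [80, 30, 10, 40]):
    print(example, "->", select_merges(example))
```

Self-merge of a single oversized CFP is deliberately left out of this sketch.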

Not all CFPs with available space qualify for merge. For example, if two adjacent CFPs are each 60% full, they will not qualify for merge, which leaves 40% of the storage for these CFPs unused. In the worst case, all CFPs can be a little over 50% full, leading to storage utilization of only about 50%. It is important to note that deleted rows may still exist in storage even though they have already been removed (i.e. garbage collected) from memory. The management of storage and memory is somewhat independent from a garbage collection perspective. A rule of thumb is that the storage taken by 'Active' CFPs (note, this does not account for all CFPs) can be up to 2x larger than the size of durable tables in memory.
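The 2x rule of thumb follows from simple arithmetic, sketched here in Python. The function name is hypothetical, and the model assumes every CFP occupies one ideal-size data file regardless of how full it is.

```python
def storage_to_data_ratio(percent_full):
    """Allocated storage (one ideal-size file per CFP) divided by live data."""
    live = sum(percent_full)
    allocated = 100 * len(percent_full)
    return allocated / live


# Two adjacent CFPs at 60% cannot be merged, so 40% of each file stays unused.
print(round(storage_to_data_ratio([60, 60]), 2))
# Worst case: every CFP a little over 50% full, so the ratio approaches 2x.
print(round(storage_to_data_ratio([51] * 8), 2))
```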

You can force a merge with the stored procedure sys.sp_xtp_merge_checkpoint_files (Transact-SQL), which overrides the merge policy. For details, please refer to http://msdn.microsoft.com/en-us/library/dn198330(v=sql.120).aspx

Life Cycle of a CFP

As mentioned earlier, once the CFPs are merged, they go through a state transition before they can be deallocated. At any given time, a CFP (data/delta file pair) can be in one of the following states:

- PRECREATED – A small set of CFPs is kept pre-allocated to minimize or eliminate any waits to allocate new files as transactions are being executed. These are full sized, with a data file size of 128MB and a delta file size of 8MB, but contain no data. The number of CFPs is computed as the number of logical processors or schedulers, with a minimum of 8. This is a fixed storage overhead in databases with memory-optimized tables.

- UNDER CONSTRUCTION – Set of CFPs that store newly inserted and possibly deleted data rows since the last checkpoint.

- ACTIVE – These contain the inserted/deleted rows from previous closed checkpoints. These CFPs contain all the inserted/deleted rows required before applying the active part of the transaction log at database restart. We expect the size of these CFPs to be approximately 2x the in-memory size of memory-optimized tables, assuming the merge operation is keeping up with the transactional workload.

- MERGE TARGET – This CFP stores the consolidated data rows from the CFP(s) that were identified by the merge policy. Once the merge is installed, the MERGE TARGET transitions into the ACTIVE state.

- MERGED SOURCE – Once the merge operation is installed, the source CFPs are marked as MERGED SOURCE. Note that the merge policy evaluator may identify multiple merges, but a CFP can only participate in one merge operation.

- REQUIRED FOR BACKUP/HA – Once the merge has been installed and the MERGE TARGET CFP is part of a durable checkpoint, the merge source CFPs transition into this state. CFPs in this state are needed for operational correctness of the database with memory-optimized tables, for example, to recover from a durable checkpoint in order to go back in time. A CFP can be marked for garbage collection once the log truncation point moves beyond its transaction range.

- IN TRANSITION TO TOMBSTONE – These CFPs are not needed by the In-Memory OLTP engine and can be garbage collected. This state indicates that these CFPs are waiting for the background thread to transition them to the next state, TOMBSTONE.

- TOMBSTONE – These CFPs are waiting to be garbage collected by the filestream garbage collector. Please refer to FS Garbage Collection for details.
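The states above can be viewed as a small state machine. The Python sketch below encodes the transitions as we read them from the descriptions; the names `CfpState`, `NEXT_STATES`, `advance`, and `path_to_tombstone` are hypothetical, and the first two transitions are inferred rather than stated explicitly.

```python
from enum import Enum


class CfpState(Enum):
    PRECREATED = "PRECREATED"
    UNDER_CONSTRUCTION = "UNDER CONSTRUCTION"
    ACTIVE = "ACTIVE"
    MERGE_TARGET = "MERGE TARGET"
    MERGED_SOURCE = "MERGED SOURCE"
    REQUIRED_FOR_BACKUP_HA = "REQUIRED FOR BACKUP/HA"
    IN_TRANSITION_TO_TOMBSTONE = "IN TRANSITION TO TOMBSTONE"
    TOMBSTONE = "TOMBSTONE"


# Assumed transition table; entries marked "inferred" are not spelled out in the text.
NEXT_STATES = {
    CfpState.PRECREATED: {CfpState.UNDER_CONSTRUCTION},    # inferred
    CfpState.UNDER_CONSTRUCTION: {CfpState.ACTIVE},        # inferred: checkpoint closes
    CfpState.ACTIVE: {CfpState.MERGED_SOURCE},             # merge installed
    CfpState.MERGE_TARGET: {CfpState.ACTIVE},              # merge installed
    CfpState.MERGED_SOURCE: {CfpState.REQUIRED_FOR_BACKUP_HA},
    CfpState.REQUIRED_FOR_BACKUP_HA: {CfpState.IN_TRANSITION_TO_TOMBSTONE},
    CfpState.IN_TRANSITION_TO_TOMBSTONE: {CfpState.TOMBSTONE},
    CfpState.TOMBSTONE: set(),                             # awaiting filestream GC
}


def advance(state, new_state):
    """Return new_state if the transition is allowed, otherwise raise."""
    if new_state not in NEXT_STATES[state]:
        raise ValueError(f"illegal transition {state.value} -> {new_state.value}")
    return new_state


def path_to_tombstone(state):
    """Follow the single-successor chain from `state` to TOMBSTONE."""
    path = [state]
    while NEXT_STATES[path[-1]]:
        (nxt,) = NEXT_STATES[path[-1]]
        path.append(nxt)
    return path


print(" -> ".join(s.value for s in path_to_tombstone(CfpState.ACTIVE)))
```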

After accounting for the storage taken by CFPs in various states, the overall storage taken by durable memory-optimized tables can be much larger than 2x. The DMV sys.dm_db_xtp_checkpoint_files can be queried to list all the CFPs in the memory-optimized filegroup, including the state they are in. Transitioning CFPs from the MERGED SOURCE state to TOMBSTONE, and ultimately getting them garbage collected, can take up to 5 checkpoints and, if the database is configured for the full or bulk-logged recovery model, transaction log backups. You can, of course, manually force a checkpoint followed by a log backup to expedite garbage collection, but this will add 5 empty CFPs (i.e. 5 data/delta file pairs with a data file of 128MB each). In production scenarios, the automatic checkpoints and log backups taken as part of the backup strategy will seamlessly transition CFPs through the various states without requiring any manual intervention. The impact of the garbage collection process is that in-memory databases may have a disproportionate storage footprint compared to their size in memory. It is not uncommon for the storage occupied by memory-optimized tables to be 3x their size in memory.
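The fixed part of this footprint, the PRECREATED CFPs described earlier, is easy to estimate. Here is a rough Python sketch; the function name is hypothetical, and it assumes the 128MB/8MB file sizes and the minimum of 8 pairs stated above.

```python
def precreated_overhead_mb(logical_processors, data_mb=128, delta_mb=8):
    """Storage reserved by PRECREATED CFPs, in MB."""
    count = max(8, logical_processors)   # one pair per logical processor, minimum 8
    return count * (data_mb + delta_mb)


print(precreated_overhead_mb(4))    # small machine: the minimum of 8 pairs applies
print(precreated_overhead_mb(16))
```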

In the next blog, we will walk through an example showing transition of CFPs across all phases.