Using Natively Compiled Stored Procedures in SQL Server 2014

The new In-Memory OLTP feature in SQL Server 2014 greatly optimizes the performance of certain OLTP applications. By using the new memory-optimized tables you can speed up data access, in particular in concurrency situations, due to the lock- and latch-free architecture of the In-Memory OLTP Engine. This means that applications which suffer from a lot of contention between concurrent transactions can greatly benefit from just migrating your hot tables to memory-optimized.

The other part of the equation are the new natively compiled stored procedures, which allow you to speed up query processing and business logic execution. These native procs are T-SQL stored procedures that are compiled to native code, in the form of DLLs, which are linked to the SQL Server process, for very efficient execution. The efficiency in natively compiled procs comes from savings in the execution path by baking the operations into machine code that can interact very efficiently with the In-Memory storage engine. For example, when scanning an index and identifying rows that match a certain predicate, we see that a native proc requires around 1/4th the number of CPU instructions compared with traditional interpreted T-SQL queries and stored procedures.

In this post we walk through some of the considerations when developing and using natively compiled stored procedures in your application.

OLTP-Style Operations

Natively compiled stored procedures are optimized for OLTP-style operations. Now, what do we mean by that? Some characteristics: a) single-threaded execution (MAXDOP=1); b) point lookups and small range scans, no full table scans, in general operations that touch a relatively small number of rows; c) nested-loops joins and stream aggregation; d) short-running transactions, in the ideal case a transaction spans a single execution of a natively compiled stored procedure.

Some examples of OLTP-style operations:

- Inserting a sales order along with its line items.

- Updating the status of a sales order.

- Money transfers between bank accounts.

- Stock purchase.

Native procs are not optimized for reporting-style queries which require joins between and aggregation over large data sets.

ATOMIC Blocks in Native Procs

The body of a natively compiled stored procedure must comprise exactly one ATOMIC block. ATOMIC blocks are a new concept in SQL Server 2014 that can be used only with native procs. The basic thing an ATOMIC block gives you is that all statements within the block either succeed or fail, atomically. In the context of transactions this means that:

- If no transaction exists in the session, the atomic block will start a new transaction

- If a transaction already exists, the atomic block will create a savepoint in the running transaction

- If the block throws an exception, the block is rolled back as a whole

- If execution of the block succeeds, the transaction is committed at the end of the block or, if there was an existing transaction, the savepoint that was created for the atomic block is committed

Because transactions are handled through the ATOMIC block, there is no need to bother with BEGIN TRANSACTION, ROLLBACK, or COMMIT inside natively compiled stored procedures. In fact, that syntax is not supported.

For more details about ATOMIC blocks and transaction and error handling, see the corresponding topic in Books Online.

Retry logic for handling failures

As with all transactions that touch memory-optimized tables, with natively compiled stored procedures you will need to consider retry logic to deal with potential failures such as write conflicts (error 41302) or dependency failures (error 41301). In most applications the failure rate will be low, but it is still necessary to deal with the failures by retrying the transaction. Two suggested ways of implementing retry logic are:

- Client–side retries. This is the recommended way to implement retry logic in the general case. The client application would catch the error thrown by the procedure, and would retry the procedure call. If an existing client application already has retry logic to deal with deadlocks, extending the app to deal with the new error codes is straightforward.

- Using a wrapper stored procedure. The client would call an interpreted T-SQL stored procedure that calls the native proc. The wrapper procedure then uses try/catch logic to catch the error and retry the procedure call if needed. You do need to consider in this case the possibility that results are returned to the client before the failure, and the client would not know to discard them. Therefore, to be safe, it is best to use this method only with native procs that do not return any result sets to the client. In addition, consider that using a wrapper stored procedure does impact performance. For optimal performance, use client-side retries.

For more details on retry logic and the error conditions to consider, see the corresponding topic in Books Online.

Table-Valued Parameters

Like traditional interpreted T-SQL stored procedures, natively compiled stored procedures support table-valued parameters (TVPs), which allow you to pass a rowset into a stored procedure. For example, if you want to insert a sales order along with its line items, you can use a TVP to encapsulate the line items.

The syntax and mechanisms to define and use table-valued parameters with natively compiled procs are the same as for interpreted procs. The only thing you need to take care of, is that you use a memory-optimized table type for the TVP. You can use memory-optimized table types with parameters in both native and interpreted stored procedures.

For more details and an example of the use of TVPs in natively compiled stored procedures, see the Books Online topic on memory-optimized table variables.

Optimizing Client Invocation

In general, you can view a stored procedure execution as consisting of three phases:

Figure 1: Phases of stored procedure execution

- Client invocation. This includes the call from the client, as well as the TDS processing and parsing in the server.

- Query and DML processing. This is the part of the stack that is highly optimized with natively compiled stored procedures, and it is this phase of the stored procedure execution where you will see the gain provided by In-Memory OLTP.

- Validation and commit processing. This includes hardening the commit log record to disk. The length of this phase of the procedure execution is in most cases a function of the latency of the log IO subsystem. If more time is spent writing to the log than processing the queries and DML, the gain to be had from native compilation is going to be limited.

If your application is sensitive to latency, i.e. how long it takes for a single stored procedure to execute, you will also want to optimize how you call the stored procedure from the client, in order to limit the overhead from the call of the stored procedure. Optimizing clients calls of stored procedures is not specific to natively compiled stored procedures, but it does play a bigger role for natively compiled procs, as the client invocation is proportionately a larger part of the overall procedure execution time, due to the optimization in the processing of queries and DML operations.

To optimize stored procedure calls, we recommend:

- Use the ODBC driver in SQL Server Native Client, version 11 or higher.

- Bind the parameters using SQLBindParameter, and use numbered parameters. Using numbered parameters is more efficient than used named parameters.

- Match the types of the parameters passed to the procedure with the types in the procedure definition. This avoids type casting, which introduces additional overhead

- If you need to invoke the procedure multiple times, use prepared execution – first prepare using SQLPrepare, and then execute using SQLExecute. If you need to execute a procedure only once, issue a direct RPC call using SQLExecDirect. Do not use the T-SQL EXECUTE statement.

For an example of both direct and prepared execution with the ODBC driver in SQL Native Client see Books Online here.

T-SQL Surface Area Limitations

SQL Server 2014 has some limitations on the features supported inside natively compiled stored procedures, which you should consider when using these stored procs, and if you want to get the most out of native procs.

Because of these limitations you will see it can be challenging to migrate stored procedures in your existing application to native. We suggest you look for patterns that fit the surface area for native procs and migrate those patterns to native. You do not always need to migrate an entire stored procedure: if the existing stored procedure has a substantial piece of logic that can be migrated to native, you can consider putting only that piece into a new native proc, and modify the existing proc to call the new one. Note that migrating a single statement to a natively compiled stored procedure may not be beneficial due to the overhead of stored procedure invocation – you really want to have a larger subset of the proc that you move to native.

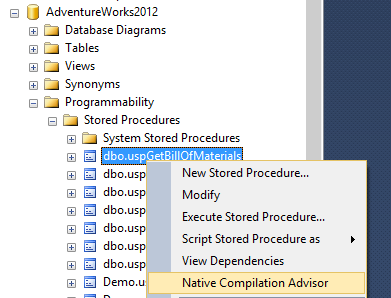

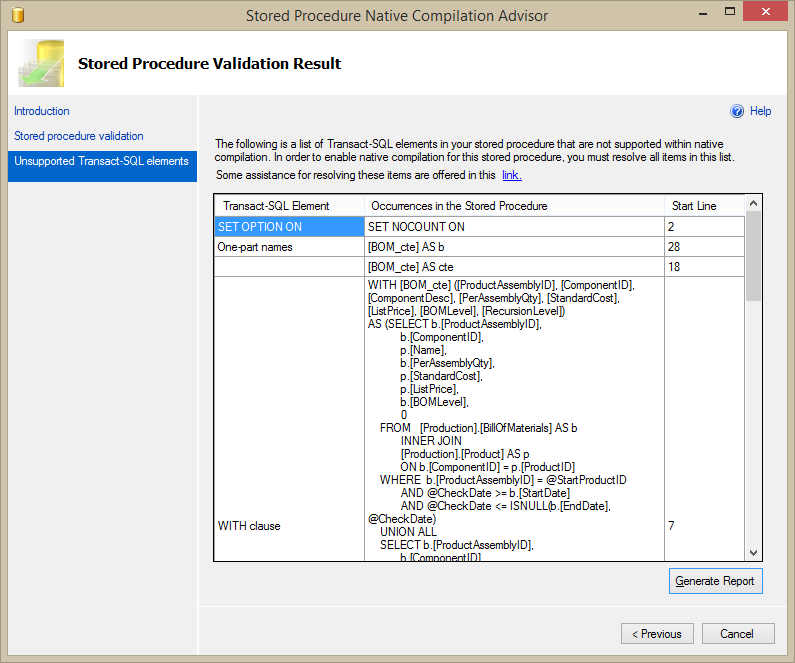

To understand which features in an existing interpreted T-SQL stored procedure are supported in natively compiled stored procedures, we recommend using the Native Compilation Advisor, which is part of Management Studio in SQL Server 2014. The Advisor will tell you which features used in the stored procedure are not supported in native, which will help in identifying the parts of the procedure that can be migrated to native, and will indicate the limitations you may need to work around.

Two following two screenshots show an example of how to use the Advisor with the stored procedure dbo.uspGetBillOfMaterials in AdventureWorks.

Figure 2: Starting the Native Compilation Advisor for dbo.uspGetBillOfMaterials

Figure 3: Results of the Native Compilation Advisor for dbo.uspGetBillOfMaterials

In future blog posts we will go into more detail on how to perform a stored procedure migration given the surface area limitations, and give examples for migrating existing stored procedures to native.