Today, Microsoft announced a significant quantum advancement and made our new Integrated Hybrid feature in Azure Quantum available to the public. This new functionality enables quantum and classical compute to integrate seamlessly in the cloud, a first for our industry and an important step forward on our path to quantum at scale. Researchers can now begin developing hybrid quantum applications that mix classical and quantum code and run on one of today’s quantum machines, from Quantinuum, in Azure Quantum.

Over the past century, classical computing has become extraordinarily versatile and has transformed every industry. Even as it continues to advance, there are certain problems it will never be able to solve efficiently. For computational problems that require closely modeling the phenomena of quantum physics, quantum computers will complement classical computers, creating a hybrid architecture that leverages the best characteristics of each design.

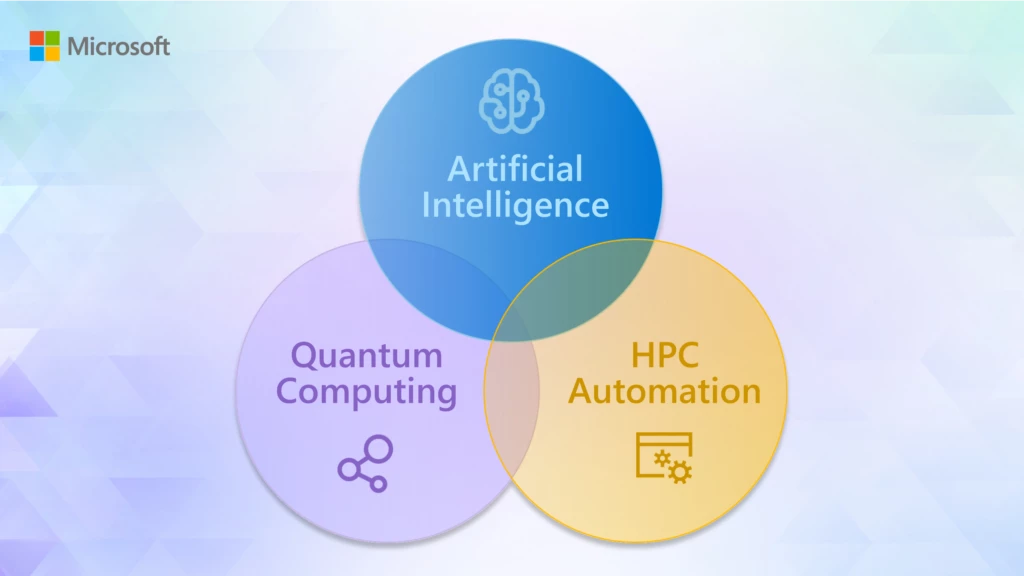

The quantum industry has long understood that quantum computing will always be a hybrid of classical and quantum compute. In fact, it was a key discussion point during this week’s annual American Physical Society (APS) March Meeting in Las Vegas. However, our industry is just starting to grapple with, and design for, the future of hybrid classical and quantum compute at scale in the public cloud. At Microsoft, we are architecting Azure as a public cloud that makes scaled quantum computing a reality and then seamlessly delivers its profound benefits to our customers. In essence, AI, high-performance computing, and quantum are being co-designed as part of Azure, and this integration will shape the future in three important and surprising ways.

1. The power of the cloud will unlock scaled quantum computing

Quantum at scale is required for scientists to help solve the hardest, most intractable problems our society faces, like reversing climate change and addressing food insecurity. Based on what we know today, largely through our resource estimation work, a machine capable of solving such problems will require at least one million stable and controllable qubits. Every day, Microsoft is making progress toward a machine of this scale.

A fundamental part of our plan to reach scale is to integrate our quantum machine alongside classical supercomputers in the cloud. A driving force behind this design is that the power of the cloud is required to run a fault-tolerant quantum machine. Achieving fault tolerance requires advanced error correction techniques, which in essence means building reliable logical qubits out of many physical qubits. While our unique topological qubit design will greatly enhance our machine’s fault tolerance, advanced software and tremendous compute power will still be required to keep the machine stable.
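
To make "logical qubits from physical qubits" a little more concrete, here is a deliberately simplified, classical sketch of the redundancy idea behind error correction: a repetition code that stores one logical bit in several physical bits, flips each bit at random, and recovers the logical value by majority vote. This is an illustrative assumption, not Microsoft's topological scheme. Real quantum codes must also correct phase errors and cannot simply copy an unknown qubit state (the no-cloning theorem), so they extract error information through syndrome measurements instead. All function names below are hypothetical.

```python
import random


def encode(logical_bit: int, distance: int = 3) -> list[int]:
    """Store one logical bit redundantly in `distance` physical bits (toy model)."""
    if distance < 1 or distance % 2 == 0:
        raise ValueError("distance must be a positive odd integer")
    return [logical_bit] * distance


def apply_noise(bits: list[int], p: float, rng: random.Random) -> list[int]:
    """Flip each physical bit independently with probability p."""
    return [b ^ 1 if rng.random() < p else b for b in bits]


def decode(bits: list[int]) -> int:
    """Recover the logical bit by majority vote over the physical bits."""
    return int(sum(bits) > len(bits) // 2)


def logical_error_rate(p: float, distance: int, trials: int, seed: int = 0) -> float:
    """Monte Carlo estimate of how often majority vote returns the wrong bit."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(trials):
        noisy = apply_noise(encode(0, distance), p, rng)
        if decode(noisy) != 0:
            failures += 1
    return failures / trials


if __name__ == "__main__":
    # Assumed physical flip probability of 5%; compare distances 1, 3, and 5.
    for d in (1, 3, 5):
        print(d, logical_error_rate(p=0.05, distance=d, trials=100_000))
```

With a 5% physical flip rate, the estimates should approach the analytic values of 0.05, about 0.0073, and about 0.0012 for distances 1, 3, and 5: spending more physical bits buys a more reliable logical bit, which is the trade-off error correction makes at much larger scale.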

In fact, to achieve fault tolerance, our quantum machine will be integrated with petascale classical compute in Azure and will need to handle quantum-classical bandwidths of 10 to 100 terabits per second or more. At every logical clock cycle of the quantum computer, this back-and-forth with classical computers is needed to keep the quantum computer “alive” and yielding a reliable output. This throughput requirement may be surprising, but fault tolerance for quantum computing at scale means that a machine must be able to perform a quintillion operations while making at most one error.
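
A quintillion operations with at most one error implies a failure rate of at most 1 in 10^18 per operation. As a rough, hedged illustration of how much redundancy such a target demands, the sketch below assumes a toy model (a classical repetition code, independent bit flips with probability p, and ideal majority-vote decoding, far simpler than any real quantum code) and uses exact rational arithmetic to find the smallest odd code distance that meets a target failure probability. The function names and the 1-in-1,000 physical error rate are illustrative assumptions, not figures from Microsoft's resource estimates.

```python
from fractions import Fraction
from math import comb


def logical_failure_probability(p: Fraction, distance: int) -> Fraction:
    """Probability that a majority of `distance` independent bits flip.

    Toy model (assumption): repetition code, independent flips with
    probability p, ideal majority-vote decoding.
    """
    threshold = distance // 2 + 1  # flips needed to outvote the correct bits
    return sum(
        (comb(distance, k) * p**k * (1 - p) ** (distance - k)
         for k in range(threshold, distance + 1)),
        Fraction(0),
    )


def smallest_distance(p: Fraction, target: Fraction, max_distance: int = 201) -> int:
    """Smallest odd distance whose logical failure probability is <= target."""
    for d in range(1, max_distance + 1, 2):
        if logical_failure_probability(p, d) <= target:
            return d
    raise ValueError("target not reachable within max_distance")


if __name__ == "__main__":
    physical_error = Fraction(1, 1_000)  # assumed physical error rate
    target = Fraction(1, 10**18)         # at most one error per quintillion operations
    d = smallest_distance(physical_error, target)
    print(d, float(logical_failure_probability(physical_error, d)))
```

Under these assumptions the search returns a distance of 15, so even this toy model needs 15 physical bits per logical bit. Real quantum codes typically need more physical qubits per logical qubit, because they must handle phase errors as well as bit flips, and they depend on continuous classical decoding, which is where the quantum-classical bandwidth requirement comes from.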

To put this number into perspective, imagine each operation were a grain of sand. For the machine to be fault tolerant, only a few of all the grains of sand on Earth could be faulty. Only the cloud can deliver this kind of scale, making Azure both a key enabler and a differentiator of Microsoft’s strategy to bring quantum at scale to the world.

2. The rise of classical compute capabilities in the cloud can help scientists solve quantum mechanical problems today

An incredible benefit of the rise of classical public cloud services is that scientists can achieve more at lower cost right now. For example, scientists from Microsoft, ETH Zurich, and the Pacific Northwest National Laboratory recently presented a new automated workflow that leverages the scale of Azure to transform R&D processes in quantum chemistry and materials science. By optimizing the classical simulation code and refactoring it to be cloud-native, the team achieved a 10-fold cost reduction for the simulation of a catalytic chemical reaction. These benefits will continue to grow as classical compute capabilities in the cloud advance even further.

Increasingly, we see great potential for high-performance computing and AI to accelerate advancements in chemistry and materials science. In the near term, Azure will empower R&D teams with scale and speed. In the long term, when we bring our scaled quantum machine to Azure, it will enable greater accuracy in modeling new pharmaceuticals, chemicals, and materials. The opportunity to unlock progress and growth is tremendous when you consider that chemistry and materials science impact 96 percent of manufactured goods and 100 percent of humanity. The key is to move to Azure now to both accelerate progress and future-proof your investments, as Azure is home to Microsoft’s AI and high-performance computing capabilities today and will be home to our scaled quantum machine in the future.

3. A hyperscale cloud with AI, HPC, and quantum will create unprecedented opportunities for innovators

It is only when a quantum machine is designed alongside, and integrated with, the AI supercomputers and scale of Azure that we will be able to realize the greatest impacts from computing. With Azure, innovators will be able to design and execute a new class of impactful cloud applications that seamlessly bring together AI, HPC, and quantum at scale. For example, imagine future applications that let researchers use AI at scale to sort through massive data sets, HPC to narrow down options, and quantum at scale to improve model accuracy. Combining all three in a single application will be possible only because of the seamless integration of HPC, AI, and quantum in Azure. Realizing this unprecedented opportunity requires advancing this deep integration in Azure today. As we bring HPC and AI together for advanced capabilities, we are also expanding the classical and quantum integration available right now.

Today, Microsoft took a significant step forward towards this vision by making our new Integrated Hybrid feature in Azure Quantum available to the public.

The ability to develop hybrid quantum applications that mix classical and quantum code will empower today’s quantum innovators to create a new class of algorithms. For example, developers can now build adaptive phase estimation algorithms that perform classical computation, iterating and adapting while the physical qubits remain coherent. Students can start learning algorithms without drawing circuits, using high-level programming constructs such as branching on qubit measurements (if statements), loops (for), classical calculations, and function calls. Additionally, scientists can now more easily explore ways to advance quantum error correction at the physical level on real hardware. Taken together, a new generation of quantum algorithms and protocols that could previously only be described in scientific papers can now run elegantly on quantum hardware in the cloud. A major milestone on the journey to scaled quantum computing has been achieved.
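
Adaptive (iterative) phase estimation illustrates the hybrid pattern described above: each round's measurement result feeds a classical calculation that sets the correction rotation for the next round, inside a loop. The sketch below is a purely classical Python simulation of single-ancilla iterative phase estimation for an eigenphase φ (the eigenvalue of U is e^(2πiφ)). It does not use Azure Quantum, Q#, or any Quantinuum API; the function names are hypothetical, and the ancilla's measurement probabilities are computed analytically rather than by simulating a full circuit.

```python
import math
import random


def measure_ancilla(phi: float, power: int, correction: float, rng: random.Random) -> int:
    """One round: controlled-U^power kicks phase 2*pi*power*phi onto |1>,
    a classically controlled rotation subtracts 2*pi*correction, then H and measure.
    Outcome 0 occurs with probability cos^2(pi * (power*phi - correction))."""
    p0 = math.cos(math.pi * (power * phi - correction)) ** 2
    return 0 if rng.random() < p0 else 1


def iterative_phase_estimation(phi: float, bits: int, seed: int = 0) -> list[int]:
    """Estimate the first `bits` binary digits of phi, most significant first.

    Bits are measured least significant first; earlier outcomes steer later rounds.
    """
    rng = random.Random(seed)
    measured: list[int] = []  # outcomes in measurement order (LSB first)
    for k in range(bits, 0, -1):
        # Classical feedback: correction = 0.0 b_{k+1} b_{k+2} ... (binary fraction)
        correction = sum(b / 2 ** (j + 2) for j, b in enumerate(reversed(measured)))
        measured.append(measure_ancilla(phi, 2 ** (k - 1), correction, rng))
    return list(reversed(measured))


def bits_to_phase(bits: list[int]) -> float:
    """Convert binary digits 0.b1 b2 b3 ... back to a phase in [0, 1)."""
    return sum(b / 2 ** (i + 1) for i, b in enumerate(bits))


if __name__ == "__main__":
    estimate = iterative_phase_estimation(0.625, 3)  # 0.625 = 0.101 in binary
    print(estimate, bits_to_phase(estimate))  # → [1, 0, 1] 0.625
```

When φ has an exact binary expansion of the chosen length, every round is deterministic, so the estimate is exact. On hardware with integrated hybrid support, the correction calculation and the loop would run while the qubits stay coherent; here they are ordinary Python.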

Learn more about Azure Quantum

Azure is the place where all of this innovation comes together, ensuring your investments are future-proof. It’s the place to be quantum-ready and quantum-safe, and as the cloud scales, so will your opportunity for impact. Please join Mark Russinovich, Chief Technology Officer and Technical Fellow of Azure at Microsoft, and me as we explore the future of the cloud in an upcoming Microsoft Quantum Innovation Series event.